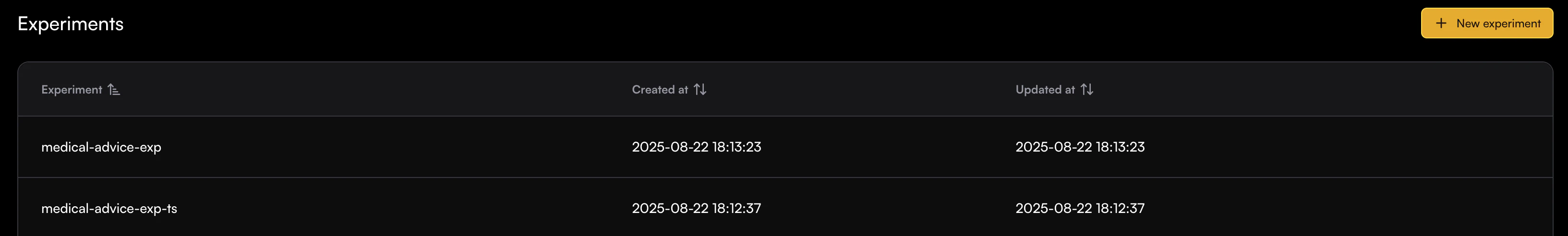

Building reliable LLM applications means knowing whether a new prompt, a new model, or a change to the flow actually makes things better.

What You Can Do with Experiments

Run Multiple Evaluators

Execute multiple evaluation checks against your dataset

View Complete Results

See all experiment run outputs in a comprehensive table view with relevant indicators and detailed reasoning

Compare Experiment Run Results

Run the same experiment against different dataset versions to see how each version affects your workflow's results

Custom Task Pipelines

Add a custom task to the experiment that produces the input for your evaluators, for example an LLM call or a semantic search.
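The flow these features describe (a custom task produces output for each dataset row, several evaluators check that output and explain their verdicts, and runs on different dataset versions are compared) can be sketched in plain Python. This page does not document the product's SDK, so this is a generic illustration under stated assumptions, not its API: `run_experiment`, `pass_rates`, `stub_task`, and the evaluator functions are all hypothetical names, and the stub task stands in for a real LLM call or semantic search.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical types (not the product's API): an evaluator receives the task
# output plus the dataset row and returns (passed, reasoning).
EvalResult = tuple[bool, str]
Evaluator = Callable[[str, dict], EvalResult]


@dataclass
class RowResult:
    row_id: str
    output: str
    scores: dict[str, EvalResult]  # evaluator name -> (passed, reasoning)


def run_experiment(dataset: list[dict], task: Callable[[dict], str],
                   evaluators: dict[str, Evaluator]) -> list[RowResult]:
    """Run the custom task on each row, then apply every evaluator to its output."""
    results = []
    for row in dataset:
        output = task(row)  # in a real pipeline: an LLM call, semantic search, etc.
        scores = {name: ev(output, row) for name, ev in evaluators.items()}
        results.append(RowResult(row["id"], output, scores))
    return results


def pass_rates(results: list[RowResult]) -> dict[str, float]:
    """Fraction of rows each evaluator passed, used to compare two runs."""
    names = results[0].scores.keys() if results else []
    return {name: sum(r.scores[name][0] for r in results) / len(results)
            for name in names}


# --- Hypothetical task and evaluators for illustration ---
def stub_task(row: dict) -> str:
    # Stand-in for an LLM call: return the input in upper case.
    return row["input"].upper()


def non_empty(output: str, row: dict) -> EvalResult:
    ok = bool(output.strip())
    return (ok, "output is non-empty" if ok else "output is empty")


def matches_expected(output: str, row: dict) -> EvalResult:
    ok = output == row["expected"]
    return (ok, "matches expected" if ok else f"expected {row['expected']!r}, got {output!r}")


evaluators = {"non_empty": non_empty, "matches_expected": matches_expected}

# Two versions of the same (made-up) dataset; v2 fixes an expected value.
dataset_v1 = [{"id": "1", "input": "hi", "expected": "HI"},
              {"id": "2", "input": "ok", "expected": "Ok"}]
dataset_v2 = [{"id": "1", "input": "hi", "expected": "HI"},
              {"id": "2", "input": "ok", "expected": "OK"}]

if __name__ == "__main__":
    for version, data in [("v1", dataset_v1), ("v2", dataset_v2)]:
        run = run_experiment(data, stub_task, evaluators)
        for r in run:  # one line per (row, evaluator), with its reasoning
            for name, (passed, reason) in r.scores.items():
                print(version, r.row_id, name, "PASS" if passed else "FAIL", reason)
        print(version, pass_rates(run))
```

Keeping each evaluator's reasoning next to its pass/fail verdict mirrors the per-result reasoning in the results table, and reducing a run to per-evaluator pass rates is one simple way to compare the same experiment across dataset versions.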